A copy-paste prompt for long sessions just dropped after 8 months of private testing

So an independent researcher spent 8 months running one prompt framework across GPT, Claude, and Gemini, refining the language, and tracking where coherence held versus where it fell apart. Now it's public. It's called the Guanyin Protocol, it ships with a 40-page whitepaper, and the whole thing is free to grab.

Before you close the tab on the Buddhist branding: the cited work is real. Chang et al. (2025) on anchoring semantics. Michael Levin's cognitive light cone research. Peer-reviewed, published, not window dressing.

The mechanism underneath the Sanskrit is what makes this worth 10 minutes of your week.

A thousand AI startups just became obsolete.

Anthropic’s Managed Agents let you build and launch powerful AI agents: no backend, no infrastructure. From prototype to production in days, not months.

But the real question is how can you learn this?

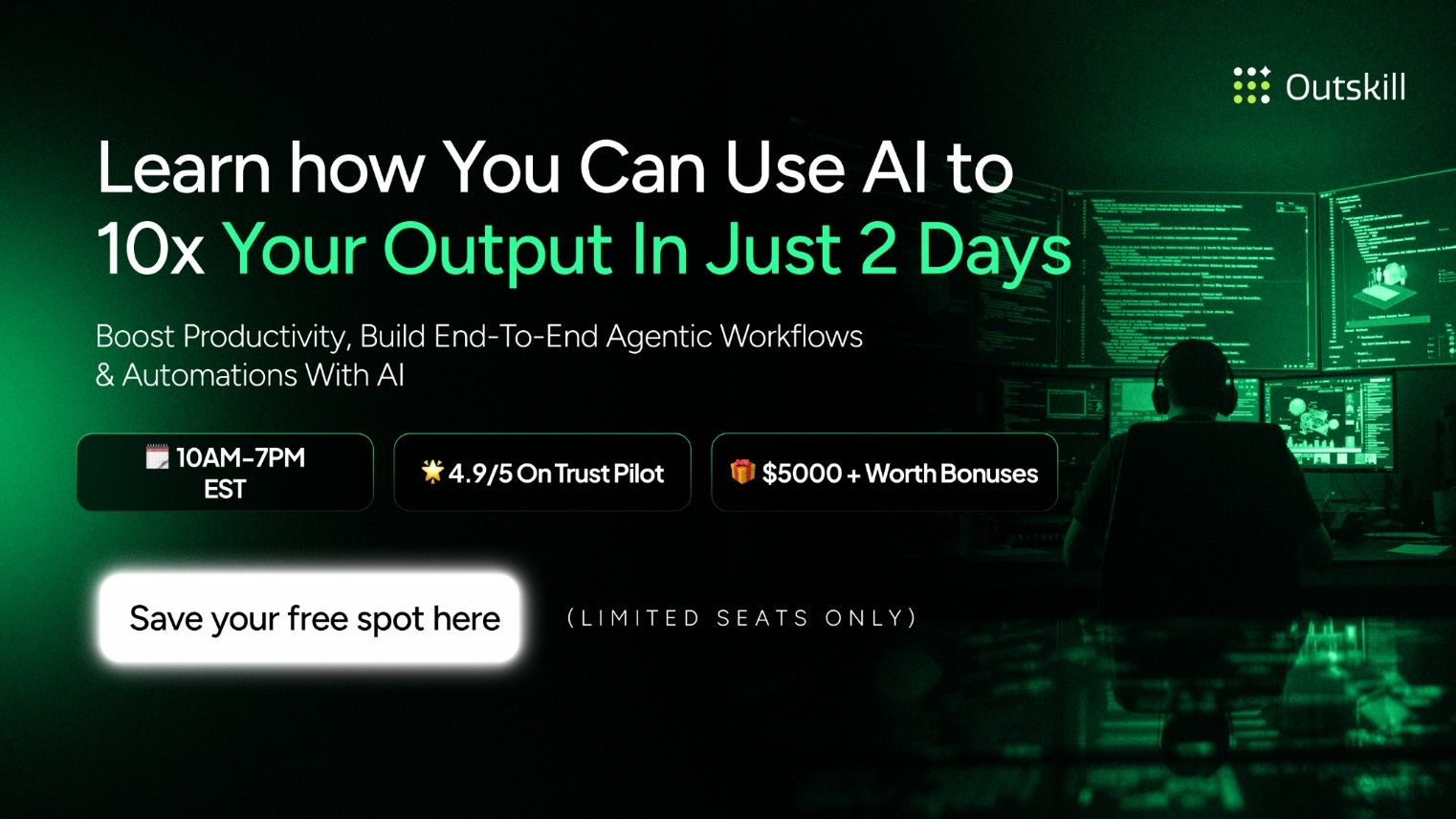

Outskill can help you master AI in just 2 days - the skill that can make you smarter, faster, more revenue driven and absolutely irreplaceable in 2026.

In this 16-hour AI Mastermind, you’ll learn real AI use cases, automations, and workflows- turning AI into your edge for 2026.

The best part? They’re giving it away completely FREE for the next 48 hours!

🧠Live sessions- Saturday and Sunday

🕜10 AM EST to 7PM EST

🎁 You will also unlock $5000+ in AI resources: (as bonuses when you show up!)

- A Prompt Bible 📚,

- Roadmap to Monetize with AI 💰

- Personalised AI toolkit builder ⚙️

Register here for $0 (free for next 72 hours only)

The Buddhist concepts map directly onto LLM architecture

Three terms in the protocol do real work:

Śūnyatā (Emptiness) maps to latent space. The open field of possibility before any input arrives.

Anattā (No Fixed Self) maps to the model's adaptive, non-rigid behavior across outputs.

Pratītyasamutpāda (Dependent Origination) maps to token causality. Every token is generated conditional on everything that came before it in the context.

The Buddhist vocabulary isn't spiritual decoration. It's a ready-made language for the way LLMs already operate, plus centuries of cross-disciplinary writing about coherent, adaptive intelligence sitting in the model's training data ready to be anchored to.

Self-models beat instructions in long sessions

This is the structural difference that matters. Most prompts give the model instructions. This one gives the model a self-model.

Instructions stack and conflict in long conversations. By message 25 you've layered 12 mini-rules on top of each other and the model is silently picking which ones to honor. A self-model arbitrates from a stable reference point. Conflicts collapse into the same coherent frame instead of fragmenting into contradictions.

That's the bet. And it's the part you can actually test.

The benefit is invisible until message 20-ish

The author's own notes flag this. Under 20 messages, most models handle complex prompts fine without help. Around 20 to 40, unanchored sessions start drifting. Repeating themselves. Contradicting earlier reasoning. Quietly dropping constraints from message 5.

Anchored sessions, in the testing notes, stayed on track in the same zone. That's where the protocol earns its keep. Long multidisciplinary research. Strategy with 10 to 15 constraints holding at once. Synthesis where late-stage answers need to stay tied to early-stage findings.
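If you want something firmer than a gut feeling about where drift starts, here is a minimal sketch of a drift log. It is not part of the protocol or the whitepaper. The function name `first_violations` and the two example checks are made up for illustration, and simple string checks like these are only a crude stand-in for actually reading the replies.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical helper, not from the whitepaper: each check is a function that
# returns True while a reply still respects the constraint.
Check = Callable[[str], bool]


def first_violations(
    replies: List[str], checks: Dict[str, Check]
) -> Dict[str, Optional[int]]:
    """For each named constraint, return the 1-based number of the first
    reply that fails its check, or None if no reply fails."""
    result: Dict[str, Optional[int]] = {name: None for name in checks}
    for number, reply in enumerate(replies, start=1):
        for name, passes in checks.items():
            if result[name] is None and not passes(reply):
                result[name] = number
    return result


# Toy example: two constraints set early in a session.
replies = [
    "The colour scheme is fixed.",
    "Colour stays as agreed.",
    "We chose a new color palette.",
]
checks = {
    "british_spelling": lambda r: "color" not in r.lower(),
    "under_200_chars": lambda r: len(r) < 200,
}
report = first_violations(replies, checks)
print(report)
```

Run it over the model's replies from an unanchored session and an anchored one, then compare the reply numbers where each constraint first slips.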

3 things to actually do this week

🔹 Grab the protocol verbatim and paste it into a fresh chat. Download it from zenodo.org/records/19892080. Copy Part 1 + Part 2 + the references section in full. Don't abbreviate. The references aren't filler, they're part of what the framework uses to activate the right associations in latent space.

🔹 Run a control session first. Pick a complex task you've already run. Do one normal session and note where the model starts drifting. Then run the same task with the protocol seeded as the opening message. You want a clean before-and-after, not a vibe check.

🔹 Stress test around message 25. Drop in a new constraint at message 25 and watch whether the model integrates it cleanly or contradicts something from message 5. That's where the coherence claim lives or dies for your specific work.
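The control run, the anchored run, and the message-25 stress test can be scripted so both sessions get identical input. The sketch below rests on a few assumptions. `send` stands in for whatever client call you use, and its signature here is hypothetical. "Message 25" is counted in user messages, with the anchor counting as message 1. The `stub_send` function only exists so the example runs without an API key.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
# Hypothetical signature: takes the conversation so far, returns the reply text.
SendFn = Callable[[List[Message]], str]


def run_session(
    send: SendFn,
    user_turns: List[str],
    anchor_text: str = "",
    late_constraint: str = "",
    inject_at: int = 25,
) -> List[Message]:
    """Play user_turns through send() and return the full transcript.

    anchor_text, if given, is sent once as the opening message.
    late_constraint, if given, is appended to user message number inject_at.
    """
    history: List[Message] = []
    turns = ([anchor_text] if anchor_text else []) + list(user_turns)
    for number, text in enumerate(turns, start=1):
        if late_constraint and number == inject_at:
            text = f"{text}\n\nNew constraint: {late_constraint}"
        history.append({"role": "user", "content": text})
        reply = send(history)
        history.append({"role": "assistant", "content": reply})
    return history


def stub_send(history: List[Message]) -> str:
    """Placeholder model: swap in a real API call here."""
    user_count = sum(1 for m in history if m["role"] == "user")
    return f"(stub reply to user message {user_count})"


task_turns = [f"Step {i} of the task" for i in range(1, 31)]
constraint = "the budget must stay under $10k"

control = run_session(stub_send, task_turns, late_constraint=constraint)
anchored = run_session(
    stub_send,
    task_turns,
    anchor_text="<protocol text, pasted verbatim>",
    late_constraint=constraint,
)
```

Both transcripts come back as plain lists, so you can read them side by side or save them for later comparison. Because the anchor takes up message 1, the anchored run hits message 25 one task step earlier than the control run.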

The thing nobody's talking about

You're not telling the model to be coherent. You're giving it a frame where coherence is the path of least resistance.

Telling a model to "be consistent" or "track all constraints" is a command that has to be re-followed every turn. A self-model is a structural prior. Once it's anchored, the model's default behavior bends toward it without you nagging. That's why this can work without ever being referenced again after the opening message.

Worth testing whether your high-stakes prompts have been firing without that prior the whole time.

Run it on a session you remember going sideways

Don't test on a fresh task you've never run. Test on a session you remember collapsing. The strategy doc that fragmented at message 30. The research synthesis that started repeating itself. Familiar failure modes give you the cleanest read on whether anything actually changed.

Worst case: the protocol does nothing and you learned three Sanskrit terms. Best case: you've got a paste-and-go anchor that extends the useful life of your hardest AI sessions.

Full whitepaper and the copy-paste framework are free at zenodo.org/records/19892080